AI-Assisted Design-to-Code Workflow for Frontend Product Development

Role: Hybrid Creative Director

Client: Berget AI

Challenge: Compressing the entire web development process to fit a team of one, plus AI support.

This case describes a controlled, reproducible workflow where I take ownership from interaction design to functional UI in production. The focus is on system integrity, component logic, and implementation discipline rather than visual experimentation.

In many teams, design intent degrades when moving from Figma to code. Decisions about hierarchy, interaction logic, and states are either reinterpreted or rebuilt during implementation. When AI is introduced as a code generator without explicit constraints, this fragmentation increases.

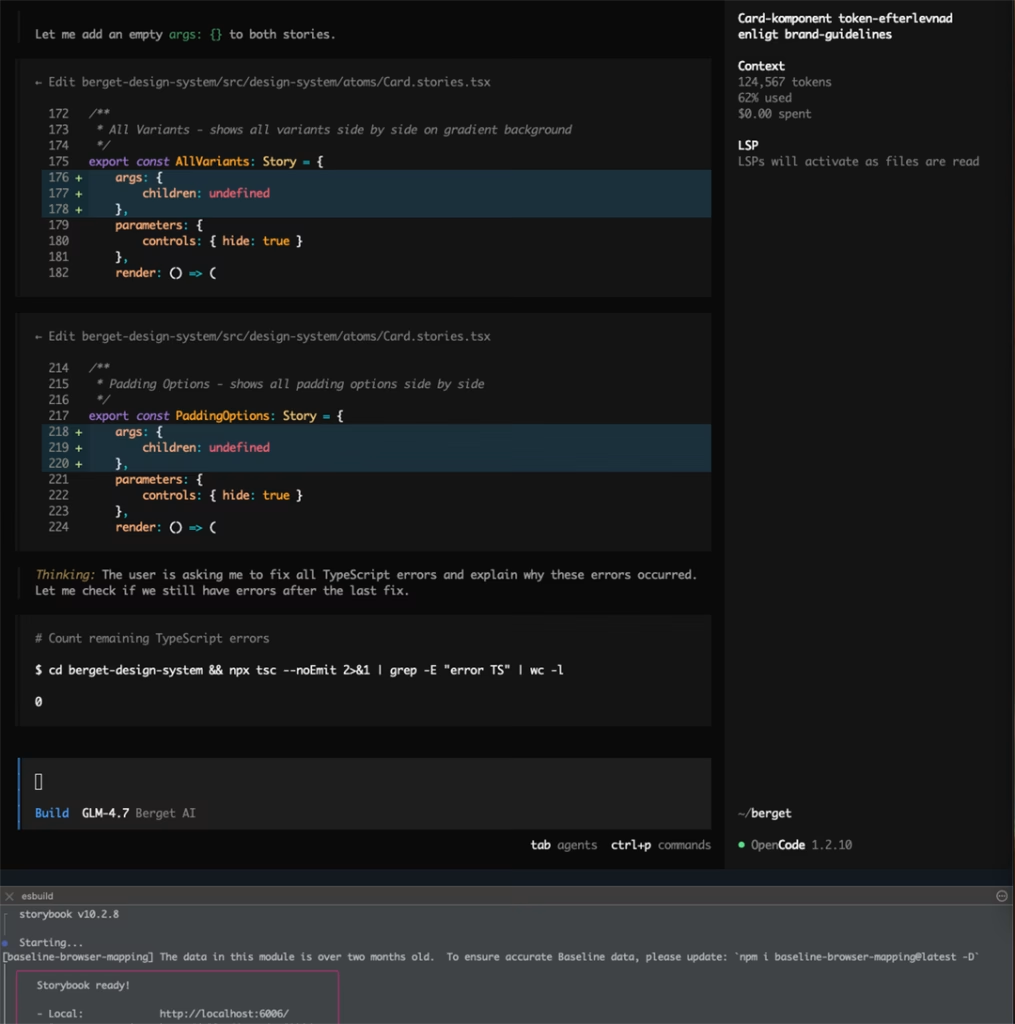

I have been using Berget Code, Berget AI’s Open Code-based inference model, which runs on servers in Sweden.

Solution: An AI-assisted workflow that connects design, a structured component system, and implementation through explicit rules and bounded responsibilities.

Design: Flows and views are created in Figma. Each view includes short annotations defining purpose, primary interaction, and required states. Design tokens are categorized and documented as a single source of truth to be referenced by both human and AI coders. UI elements are mapped to existing Storybook components or flagged as new components when necessary.
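
The annotations and tokens described above could be captured in a typed structure that both a human and an AI agent can read. The following is a minimal sketch, not the project's actual files: the token names and values, the `ViewAnnotation` shape, the example view, and the component names are all assumptions made for illustration.

```typescript
// Hypothetical design tokens; the real names and values live in the
// project's own token documentation.
const tokens = {
  color: { surface: "#ffffff", textPrimary: "#111827", danger: "#b91c1c" },
  spacing: { sm: "0.5rem", md: "1rem", lg: "1.5rem" },
} as const;

type ViewState = "default" | "loading" | "empty" | "error";

// Assumed shape of a per-view annotation: purpose, primary interaction,
// required states, and the mapping of UI elements to components.
interface ViewAnnotation {
  view: string;
  purpose: string;
  primaryInteraction: string;
  requiredStates: ViewState[];
  components: { name: string; status: "existing" | "new" }[];
}

// Illustrative view, not taken from the project.
const exampleView: ViewAnnotation = {
  view: "ApiKeys",
  purpose: "List and manage API keys",
  primaryInteraction: "Create a new key",
  requiredStates: ["default", "loading", "empty", "error"],
  components: [
    { name: "DataTable", status: "existing" },
    { name: "Button", status: "existing" },
    { name: "KeyRevealField", status: "new" },
  ],
};

// Returns the components that are flagged as new and therefore need to be
// designed and documented before implementation starts.
function newComponents(annotation: ViewAnnotation): string[] {
  return annotation.components
    .filter((c) => c.status === "new")
    .map((c) => c.name);
}
```

Keeping the "existing or new" flag in data rather than in prose makes it easy to list what still has to be added to Storybook before a view is handed to the agent.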

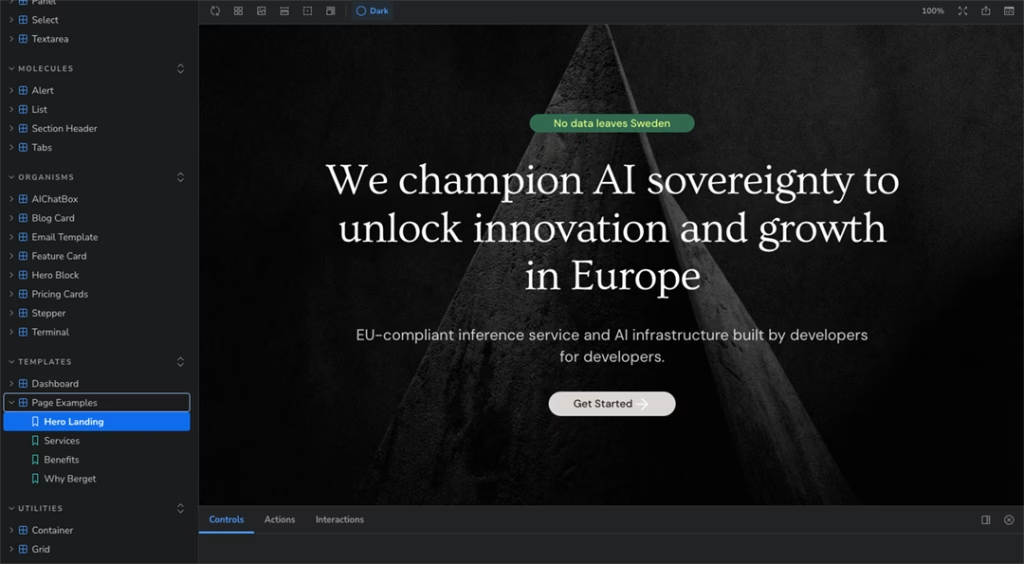

Component Layer: The UI follows Atomic Design principles. Atoms and molecules are generated as Tailwind-based components with AI assistance and manually reviewed when needed. Organisms handle layout and state composition. All components are documented in Storybook with clearly defined states, including empty and error scenarios.
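
One way to keep component states explicit is to let the type system require an entry for every state. The sketch below assumes a hypothetical list component with four states; the state names and the chosen Tailwind utility classes are illustrative, not the project's actual styling.

```typescript
// Assumed set of states a list-like component must document.
type ListState = "default" | "loading" | "empty" | "error";

// Record<ListState, string> forces one entry per state: removing a key
// here is a compile-time error, so no state can be silently left out.
const listStateClasses: Record<ListState, string> = {
  default: "text-gray-900",
  loading: "animate-pulse text-gray-400",
  empty: "italic text-gray-500",
  error: "text-red-700",
};

// Shared base classes plus the state-specific classes.
function listClasses(state: ListState): string {
  return ["rounded-md p-4", listStateClasses[state]].join(" ");
}

// The same list of states can drive one documented story per state.
const documentedStates: ListState[] = ["default", "loading", "empty", "error"];
```

For example, `listClasses("error")` yields the base classes followed by the error styling, and iterating over `documentedStates` gives a natural checklist of stories to write.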

AI-Assisted Implementation: An AI coding agent operates as an execution layer. It has access to the Storybook library. The agent is instructed to compose code strictly from documented components and not introduce new UI patterns or independent UX decisions.
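
The "documented components only" rule can also be checked mechanically after the agent has produced code. The following is a rough sketch under several assumptions: components are imported by name from a single hypothetical path `@/components`, the allow-list is maintained by hand, and a regular expression is good enough for the check (a real setup would more likely use a lint rule or a parser).

```typescript
// Hypothetical allow-list mirroring the components documented in Storybook.
const documentedComponents: string[] = [
  "Button",
  "TextField",
  "DataTable",
  "EmptyState",
  "ErrorBanner",
];

// Returns every name imported from "@/components" that is not on the
// allow-list. Only handles named imports of the form
//   import { A, B as C } from "@/components";
function undocumentedImports(source: string): string[] {
  const importPattern = /import\s*\{([^}]*)\}\s*from\s*["']@\/components["']/g;
  const violations: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = importPattern.exec(source)) !== null) {
    const names = match[1].split(",");
    for (const raw of names) {
      // Drop any "as Alias" suffix so the original export name is checked.
      const name = raw.trim().split(/\s+as\s+/)[0];
      if (name !== "" && documentedComponents.indexOf(name) === -1) {
        violations.push(name);
      }
    }
  }
  return violations;
}

// Example input standing in for agent-generated code.
const generated = 'import { Button, FancyCarousel } from "@/components";';
const violations = undocumentedImports(generated);
```

Here `violations` contains `FancyCarousel`, which signals that the agent introduced a UI pattern that has not been designed or documented.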

The result: A working procedure for building a complex, AI-powered production pipeline.

The project results in a functional frontend view built with Next.js, React and Tailwind, composed entirely from documented Storybook components. Interaction states, loading logic, and error handling are explicitly defined and reproducible. The workflow supports extension to additional views without renegotiating design intent.
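
Explicit interaction states can be modelled as a discriminated union, so that a view can only be in one well-defined state at a time. This is a minimal sketch of that idea; the `FetchResult` shape and the component names `Skeleton`, `ErrorBanner`, `EmptyState`, and `DataTable` are assumptions, not the project's actual API.

```typescript
// Each variant carries only the data that is valid in that state.
type ViewState<T> =
  | { status: "loading" }
  | { status: "error"; message: string }
  | { status: "empty" }
  | { status: "ready"; items: T[] };

// Assumed shape of a raw data-fetching result.
interface FetchResult<T> {
  pending: boolean;
  error?: string;
  items?: T[];
}

// Collapses the loose fetch result into exactly one view state.
function toViewState<T>(result: FetchResult<T>): ViewState<T> {
  if (result.pending) return { status: "loading" };
  if (result.error !== undefined) return { status: "error", message: result.error };
  if (!result.items || result.items.length === 0) return { status: "empty" };
  return { status: "ready", items: result.items };
}

// Maps each state to the name of the documented component that renders it.
// The switch is exhaustive: adding a new status without a case is a type error.
function componentFor<T>(state: ViewState<T>): string {
  switch (state.status) {
    case "loading":
      return "Skeleton";
    case "error":
      return "ErrorBanner";
    case "empty":
      return "EmptyState";
    case "ready":
      return "DataTable";
  }
}
```

Because the mapping from state to component is a pure function, the same logic can be reused for additional views and exercised without rendering anything.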

Scope Limitations: The project is ongoing and does not cover backend architecture, data modeling, or advanced business logic. AI is used strictly as an accelerator within predefined structural constraints.

Outcome: This case demonstrates the ability to translate design intent into production-ready UI and web design through a structured interplay between design systems, tokens, component architecture, and AI-assisted development, with clearly defined boundaries and accountability.